What is a web spider and how does it work in SEO?

When you browse the internet, it’s easy to forget the complexity behind every website you visit.

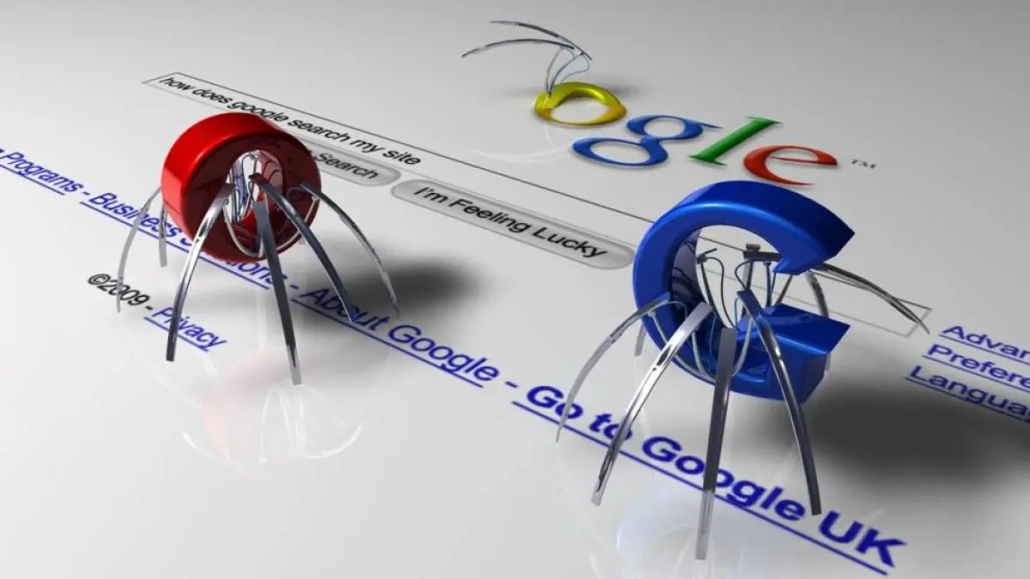

One of the most fundamental tools in this vast digital ecosystem is the web spider.

If you work in digital marketing, or simply want to understand how search engines like Google and Bing discover and index websites, this article is for you.

Let’s explore what a web spider is, how it works, and why it is crucial for SEO.

What is a Web Spider?

A web spider, also known as a web crawler, crawler, or simply bot, is a computer program designed to navigate the web automatically and systematically.

Its main function is to discover and fetch content on the World Wide Web so that a search engine can index it.

These bots are essential for search engines as they allow them to gather information about web pages and build a web index.

How Do Web Spiders Work?

Web spiders start their work by visiting an initial set of URLs, known as seeds.

From these URLs, they automatically crawl the hyperlinks found on each page to discover new URLs.

This process continues recursively, allowing spiders to crawl and index a vast amount of information in a short period.

The basic functioning of a web spider includes the following steps:

- Starting at a seed URL: The spider begins its journey from one or more predefined URLs.

- Robots exclusion: Before crawling a site, the spider checks the robots.txt file to identify pages that should not be crawled.

- Link discovery: While navigating a page, the spider detects and follows internal and external links.

- Indexing: The information found on each page is stored in a database for subsequent processing.

- Updating: Spiders periodically return to already indexed pages to detect changes and update the information.
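
The steps above can be sketched as a small breadth-first crawl loop. This is a minimal illustration, not how any particular search engine implements its crawler: `fetch` and `is_allowed` are hypothetical helpers passed in by the caller (the second stands in for the robots.txt check), and the `PAGES` dictionary is an invented in-memory "web" so the example runs without network access.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urldefrag


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seeds, fetch, is_allowed, max_pages=100):
    """Breadth-first crawl starting from the seed URLs.

    fetch(url) returns the page HTML or None; is_allowed(url) stands in
    for the robots.txt check. Both are hypothetical, caller-supplied helpers.
    """
    frontier = deque(seeds)  # URLs waiting to be visited
    seen = set(seeds)        # URLs already queued, to avoid loops
    store = {}               # url -> HTML, a stand-in for the index database

    while frontier and len(store) < max_pages:
        url = frontier.popleft()
        if not is_allowed(url):
            continue
        html = fetch(url)
        if html is None:
            continue
        store[url] = html

        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links and drop #fragments.
            link, _ = urldefrag(urljoin(url, href))
            if link.startswith("http") and link not in seen:
                seen.add(link)
                frontier.append(link)
    return store


# A tiny in-memory "web" (invented for this example).
PAGES = {
    "https://example.com/": '<a href="/about">About</a> <a href="/private">P</a>',
    "https://example.com/about": '<a href="/">Home</a> <a href="/missing">X</a>',
    "https://example.com/private": "secret",
}

crawled = crawl(
    seeds=["https://example.com/"],
    fetch=PAGES.get,
    is_allowed=lambda url: not url.endswith("/private"),
)
print(sorted(crawled))
# ['https://example.com/', 'https://example.com/about']
```

The `seen` set is what keeps the loop from revisiting pages that link back to each other, and `max_pages` acts as a very rough stand-in for a crawl limit.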


Importance of Web Spiders in SEO

SEO (Search Engine Optimization) relies heavily on web spiders, as they collect the content that search engines index and show in search results.

Here are some reasons why web spiders are essential for SEO:

1. Content Indexing

For a web page to appear in search results, it first needs to be indexed.

Web spiders crawl each page, collect information, and store it in search engine indexes.

This process is crucial for making your content visible in relevant searches.

2. Optimization of Crawl Budget

The crawl budget refers to the number of pages a web spider can and wants to crawl on your site within a given period.

Optimizing this budget is essential to ensure that the most important pages are crawled more frequently.

Factors such as server load and site performance influence how many pages are crawled and how often.

3. Content Update

Web spiders not only crawl new pages but also return to already indexed pages to detect changes.

This is vital for keeping the content in the search engine index updated, ensuring that users receive the most relevant and recent information.
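
One simple way to picture change detection is to compare a fingerprint of the page with the one stored on the previous visit. The sketch below assumes a plain SHA-256 hash of the HTML; real crawlers may also rely on HTTP signals such as `Last-Modified` or `ETag` headers, and the URL and page bodies here are invented for illustration.

```python
import hashlib


def fingerprint(html: str) -> str:
    """Return a SHA-256 digest of the page body."""
    return hashlib.sha256(html.encode("utf-8")).hexdigest()


def has_changed(url: str, html: str, known: dict) -> bool:
    """Compare the page with its stored fingerprint, then update the store."""
    new = fingerprint(html)
    changed = known.get(url) != new
    known[url] = new
    return changed


known_fingerprints = {}
url = "https://example.com/pricing"  # hypothetical URL

first = has_changed(url, "<h1>Plans from 30</h1>", known_fingerprints)
second = has_changed(url, "<h1>Plans from 30</h1>", known_fingerprints)
third = has_changed(url, "<h1>Plans from 35</h1>", known_fingerprints)
print(first, second, third)  # True False True
```

The first visit counts as a change because nothing is stored yet; the second is identical, and the third differs because the page body was edited.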

4. Error Identification

Web spiders can detect URLs that return error responses, broken links, and other issues that could hurt your search rankings.

Identifying and correcting these errors helps improve user experience and the visibility of your website.
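
As a rough illustration, once a crawl has recorded the HTTP status of each URL, broken internal links can be listed by joining that data with the link graph. The `LINK_GRAPH` and `STATUS` dictionaries below are invented sample data, and `find_broken_links` is a hypothetical helper, not part of any SEO tool.

```python
# Invented crawl results: which page links to which, and the status of each URL.
LINK_GRAPH = {
    "/": ["/blog", "/old-post"],
    "/blog": ["/", "/old-post"],
}
STATUS = {"/": 200, "/blog": 200, "/old-post": 404}


def find_broken_links(link_graph, status_of):
    """Return (source, target, status) for links whose target answered 4xx or 5xx."""
    broken = []
    for source, targets in link_graph.items():
        for target in targets:
            status = status_of.get(target)
            # Targets with no recorded status are skipped rather than guessed at.
            if status is not None and status >= 400:
                broken.append((source, target, status))
    return broken


broken_links = find_broken_links(LINK_GRAPH, STATUS)
for source, target, status in broken_links:
    print(f"{source} -> {target} returned {status}")
```

Reporting the source page alongside the failing target makes it clear where the link needs to be fixed.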

Types of Web Spiders

There are various types of web spiders, each with a specific purpose.

Here are some of the most common types:

1. Search Engine Spiders

These are the best-known spiders, used by search engines like Google (Googlebot) and Bing (Bingbot) to index web content.

Their main objective is to collect data about web pages to build an index that enables the display of relevant search results.

2. Site-Specific Spiders

Some websites use internal spiders to crawl and update their own content.

This is common in large e-commerce sites that need to keep their product databases up to date.

3. Research Spiders

These bots are used by researchers to collect specific data from the web.

They can be employed in market studies, competitor analysis, and other research activities.

How to Optimize Your Site for Web Spiders

To ensure that web spiders can efficiently crawl and index your site, it’s important to follow certain optimization practices.

Here are some key tips:

1. Create and Maintain a Robots.txt File

The robots.txt file is essential for guiding web spiders on which pages should and should not be crawled.

Make sure to configure it correctly to avoid accidentally excluding important pages.
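
To check a configuration before publishing it, you can test your rules with Python’s standard `urllib.robotparser` module. The rules, the `ExampleBot` user agent, and the paths below are assumptions made up for this sketch; swap in your own file and URLs.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep crawlers out of the cart and internal search.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(path, agent="ExampleBot"):
    """Return True if the given agent may crawl the path under these rules."""
    return parser.can_fetch(agent, "https://example.com" + path)


for path in ["/blog/web-spiders", "/cart/checkout", "/search"]:
    print(path, "->", "allowed" if allowed(path) else "blocked")
```

Running a list of your most important URLs through a check like this is a quick way to catch a rule that blocks more than intended.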

2. Optimize Internal Link Structure

A well-organized internal link structure makes it easier for web spiders to discover and crawl all important pages on your site.

Use relevant internal links and ensure that each important page is just a few clicks away from the main page.
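
"A few clicks away" can be measured as click depth: the minimum number of links a visitor or spider must follow from the main page. Here is a minimal sketch using breadth-first search over an internal link graph; the `LINKS` data is invented, and in practice it would come from a crawl of your own site.

```python
from collections import deque

# Hypothetical internal link graph: page -> pages it links to.
LINKS = {
    "/": ["/blog", "/products"],
    "/blog": ["/blog/web-spiders"],
    "/products": ["/products/shoes"],
    "/products/shoes": ["/products/shoes/red"],
    "/blog/web-spiders": [],
    "/products/shoes/red": [],
    "/old-landing": ["/"],  # no page links here: an orphan
}


def click_depths(links, home="/"):
    """Return the minimum number of clicks from the home page to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


depths = click_depths(LINKS)
orphans = sorted(set(LINKS) - set(depths))

for page, depth in sorted(depths.items(), key=lambda item: item[1]):
    print(depth, page)
print("Orphan pages:", orphans)  # Orphan pages: ['/old-landing']
```

Pages with a high depth, or pages that never appear in the result at all, are the ones a spider following links is least likely to reach.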

3. Improve Load Speed

Your site’s load speed affects how much of it web spiders can crawl.

When pages respond quickly, a spider can fetch more of them within the same crawl budget.

Optimize images, use caching, and minimize the use of heavy scripts to improve speed.

4. Update Content Regularly

Web spiders return to pages to detect updates.

Keep your content fresh and updated to ensure search engines index the most recent information.

5. Use XML Sitemaps

XML sitemaps are files that list the URLs on your website that you want crawled and can provide additional information about each one, such as when it was last modified.

Submitting a sitemap to tools like Google Search Console can help web spiders crawl and index your site more efficiently.
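
For reference, a basic sitemap can be generated with Python’s standard library. This sketch uses the `urlset`, `url`, `loc`, and `lastmod` elements from the sitemaps.org protocol; the URLs and dates are placeholders, and `build_sitemap` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML (as bytes) from (url, last_modified) pairs.

    last_modified is a date string in YYYY-MM-DD format.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    # xml_declaration requires Python 3.8 or later.
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Placeholder pages and dates, for illustration only.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-05-01"),
    ("https://example.com/blog/web-spiders", "2024-05-10"),
])
print(sitemap_xml.decode("utf-8"))
```

The resulting file is typically saved as `sitemap.xml` at the root of the site before being submitted.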


Challenges and Limitations of Web Spiders

Although web spiders are extremely useful, they also face certain challenges and limitations:

1. Dynamic Content and AJAX

Web spiders can have difficulty crawling content generated dynamically through technologies like AJAX.

Make sure this type of content is also available to crawlers, for example by rendering it on the server so that it appears in the initial HTML.
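
A quick, admittedly simplified, way to spot this problem is to check whether key text is present in the HTML the server sends, before any JavaScript runs. The two sample pages and the `in_initial_html` helper are assumptions for this sketch; it says nothing about how a given search engine renders scripts.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects page text, ignoring anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self._skip = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.chunks.append(data.strip())


def in_initial_html(html, phrase):
    """Return True if the phrase appears in the text of the unrendered HTML."""
    parser = VisibleText()
    parser.feed(html)
    return phrase.lower() in " ".join(parser.chunks).lower()


# Invented examples: the same heading delivered two different ways.
SERVER_RENDERED = "<html><body><h1>Red running shoes</h1></body></html>"
CLIENT_RENDERED = (
    '<html><body><h1 id="title"></h1>'
    '<script>document.getElementById("title").textContent = "Red running shoes";</script>'
    "</body></html>"
)

print(in_initial_html(SERVER_RENDERED, "red running shoes"))  # True
print(in_initial_html(CLIENT_RENDERED, "red running shoes"))  # False
```

In the second page the heading only exists after the script runs, so a crawler that does not execute JavaScript would see an empty title.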

2. Limited Crawl Budget

Search engines assign a limited crawl budget to each website.

This means that not all pages will be crawled with the same frequency. Optimizing your site for efficient crawling is crucial to maximize this budget.

3. Unintentional Blocking of Important Pages

A poorly configured robots.txt file can accidentally block important pages, negatively affecting your SEO ranking.

Regularly review this file to avoid errors.

Conclusion

Web spiders are an integral part of the digital ecosystem, allowing search engines to crawl and index billions of web pages.

Understanding how they work and how to optimize your site for them is essential for improving your SEO and ensuring your content is visible in search results.

From creating an appropriate robots.txt file to optimizing internal link structure and improving load speed, every aspect counts.

Stay up to date with the best SEO practices and ensure your site is always ready for web spider visits.

We hope this article has helped you better understand the work of web spiders and their importance in SEO.

If you have any questions or want to delve into a specific topic, feel free to leave a comment.

Happy crawling!

Eduardo Medina

Eduardo Medina is a programmer and SEO specialist with more than 20 years of experience in both fields. Since 2024 he has written posts for OnlyNiches.NET, where he teaches users how to rank their website and brand in search engines and on social media. In such a fast-changing world, you have to keep learning and reinventing yourself.

About Us

OnlyNiches.NET is owned by the Spanish company SELKIRKI SIGLO XXI SL with VAT ID B90262122. All rights reserved.
